Super Micro Gold Series Servers

1U Hyper SYS-112H-TN

In-Memory Database for AI Inferencing

Key Applications

Virtualization

Scale Out All-Flash NVMe Storage

Cloud Computing

CDN/vCDN/Cloud CDN

Data Center Enterprise Applications

AI Inference

Financial

-

Hyper is a flagship performance rackmount server optimized for scale-out cloud workloads.

Single Intel® Xeon® 6 Processor.

16 DIMM slots support RDIMM / MRDIMM (1DPC)

Double-width add-on card support.

Flexible networking options with 1 AIOM networking slot (OCP NIC 3.0 compatible).

Breeze through high-throughput workloads with PCIe 5.0 NVMe drive support.

Trusted Platform Module (TPM) 2.0 onboard.

Modular, tool-less design for easy serviceability.

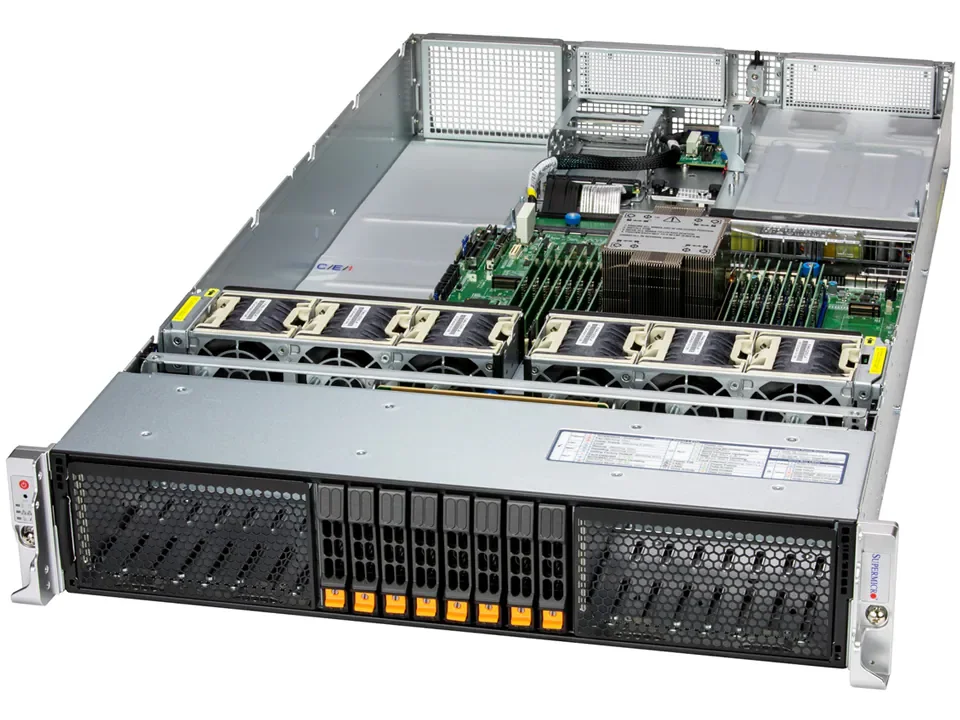

2U Hyper SYS-212H-TN

Key Applications

Virtualization

AI Inference & Machine Learning

Database Processing and High Density Storage

Software-defined Storage

Cloud Computing

Data Center Optimized

Enterprise Server

-

Hyper is a flagship performance rackmount server designed for scale-out cloud workloads.

Single Intel® Xeon® 6 Processor.

16 DIMM slots support RDIMM / MRDIMM (1DPC)

Double-width add-on card support.

Flexible networking options with 1 AIOM networking slot (OCP NIC 3.0 compatible).

Breeze through high-throughput workloads with PCIe 5.0 NVMe drive support.

Trusted Platform Module (TPM) 2.0 onboard.

Modular, tool-less design for easy serviceability.

1U CloudDC A+ AS-1115CS-TNR

2U 4-Node GrandTwin AS-2115GT-HNTR

Compute/Metadata Node for AI Storage Cluster

Key Applications

Web Server, Firewall Application

Cloud Computing, Compact Server

CDN, Edge Nodes

Data Center Optimized, Value IaaS

DNS & Gateway Servers, Firewall Application

-

Single AMD EPYC™ 9004/9005* Series Processor. (*AMD EPYC™ 9005 series drop-in support requires board revision 2.x)

Up to 12 DDR5 DIMM slots (1DPC), speeds up to 6400 MT/s (see the System Memory section for details)

2 PCIe 5.0 x16 FHHL slots

Dual AIOM (OCP 3.0) slots for networking, 1 dedicated IPMI LAN port

10 Hot-swap 2.5" SATA drive bays; optional support for 10 Hot-swap NVMe/SAS drives via additional parts; 2 M.2 PCIe 3.0 x2 NVMe slots

Dual 860W Redundant Platinum Level power supplies (full power supply redundancy depends on configuration and application load)

2U 2-GPU Enterprise AI SYS-212GB-FNR

4U 4-GPU Enterprise AI SYS-422GA-NRT

PaaS - Agent Flow AI Interface

SaaS - Agent Flow AI Interface

Web Application/Storage Server

Key Applications

High Performance Computing

Cloud Gaming

Telco Edge

Multi-Access Edge Computing (MEC)

Multi-Purpose CDN

High Availability Cache Cluster

Electric Design Automation (EDA)

Mission Critical Web Applications

-

Four hot-pluggable systems (nodes) in a 2U form factor. Each node supports the following:

Single AMD EPYC™ 9004/9005* Series Processor (*AMD EPYC™ 9005 series drop-in support requires board revision 2.x);

Up to 12 DDR5 DIMM slots (1DPC), speeds up to 6400 MT/s (see the System Memory section for details);

2 AIOM / OCP 3.0 slots;

A total of 6 Hot-swap 2.5" NVMe/SATA drives;

Dual 2200W Redundant Titanium Level power supplies (full power supply redundancy depends on configuration and application load)

In-Memory Database for AI Inferencing

Key Applications

Scientific Research

High Performance Computing (HPC)

Financial Services

Big Data Analytics/Content Repository

Enterprise Server

iSCSI SAN

HPC

Data Center

Data Processing & Storage

-

Single Socket E2 (LGA-4710) Intel® Xeon® 6700-series processors with P-cores up to 350W with air cooling

Support for up to 4 double-width PCIe GPU accelerator cards

Up to 16 DIMMs supporting up to 1TB DDR5-6400 (1DPC), 2TB DDR5-5200 (2DPC), or 512GB DDR5-8000 (1DPC)

Up to 4 PCIe 5.0 x16 FHFL double-width + 3 PCIe 5.0 x16 FHFL slots

Up to 4 front hot-swap E1.S NVMe drive bays

Two 2700W Titanium Level power supplies

Key Applications

High Performance Computing (HPC)

AI/Deep Learning Training

Cloud Gaming

Design & Visualization

3D Rendering

Model Analysis

Research Lab

Edge AI

Key Applications

Corporate Database

Appliance Optimized Storage Building Blocks

-

Single-socket SP5 AMD EPYC™ 9004/9005* Series Processor, up to 290W TDP (*AMD EPYC™ 9005 series drop-in support requires board revision 2.x)

Up to 12 DDR5 DIMM slots (1DPC)

2 dedicated PCIe 5.0 x16 AIOM slots (OCP 3.0 SFF compliant); up to 6 PCIe 5.0 slots (slot #2 is populated by the 3808 controller)

Server remote management: IPMI 2.0 / KVM over LAN / Media over LAN per node

24 Hot-swap 3.5" SAS3/SATA3 drive bays, 2 rear SATA slots, 4 optional rear NVMe slots, 2 NVMe M.2 slots (form factor: 2280/22110)

4x 8cm hot-swap counter-rotating redundant PWM cooling fans

1600W Redundant Power Supplies, Titanium Level (96%)

HBA Controller support via Broadcom® 3808

Diagnostic Imaging

VDI

Media/Video Streaming

Animation & Modeling

-

Dual Socket BR (LGA-7529) Intel® Xeon® 6900 series processors with P-cores up to 500W with air cooling

Supports up to 8 double-width 600W PCIe GPU accelerator cards at 35°C; supports 8 NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs plus 5 NVIDIA BlueField-3 cards at 35°C

Up to 24 DIMMs supporting up to 6TB DDR5-6400 in 1DPC or 6TB DDR5-8800 in 1DPC

Flexible networking with up to AIOM slots

Up to 13 PCIe 5.0 x16 FHFL slots

2U Simply Double Enterprise Storage ASG-2015S-E1CR24L

Supermicro GPU Servers

GPU SuperServer SYS-821GE-TNHR

DP Intel 8U System with NVIDIA HGX H100/H200 8-GPU and Rear I/O

High Performance Computing

AI/Deep Learning Training

Industrial Automation, Retail

Healthcare

Conversational AI

Key Applications

Business Intelligence & Analytics

Drug Discovery

Climate and Weather Modeling

Finance & Economics

-

5th/4th Gen Intel® Xeon® Scalable processor support

Support for NVIDIA HGX™ H100/H200 8-GPU

32 DIMM slots; up to 8TB (32x 256GB DRAM); memory type: 5600 MT/s ECC DDR5

8 PCIe 5.0 x16 LP slots

2 PCIe 5.0 x16 FHHL slots, plus 2 optional PCIe 5.0 x16 FHHL slots

Flexible networking options

2 M.2 NVMe slots (boot drives only)

16x 2.5" Hot-swap NVMe drive bays (12x by default, 4x optional)

3x 2.5" Hot-swap SATA drive bays

Optional: 8x 2.5" Hot-swap SATA drive bays

10 heavy-duty fans with optimal fan speed control

Optional: 8x 3000W (4+4) Redundant Power Supplies, Titanium Level

6x 3000W (4+2) Redundant Power Supplies, Titanium Level

AI Training SuperServer SYS-421GE-TNHR2-LCC

Scientific Research

Business Intelligence & Analytics

Finance Services & Fraud Detection

Large Language Model (LLM) & Generative AI

Conversational AI

Key Applications

Drug Discovery

AI/Deep Learning Training & Inference

High Performance Computing (HPC)

Autonomous Vehicle Technologies

-

Complete liquid-cooled rack integration is required for purchase

5th/4th Gen Intel® Xeon® Scalable processor support

8 NVIDIA H200/H100 GPUs

32 DIMM slots, ECC RDIMM DDR5 up to 4TB 5600MT/s (1DPC) or up to 8TB 4400MT/s (2DPC)

8 PCIe 5.0 x16 LP, 2x PCIe 5.0 x16 FHHL slots

8 front hot-swap 2.5" NVMe U.2, 2x front hot-swap NVMe M.2

4 Redundant 5250W Titanium Level power supplies

2x 10GbE RJ-45 ports, 1x dedicated BMC/IPMI port

GPU SuperServer SYS-521GE-TNRT

DP Intel 5U Dual-Root PCIe GPU System with up to 10 GPUs and extended thermal capacity

High Performance Computing

AI/Deep Learning Training

Cloud Gaming

Design & Visualization

Diagnostic Imaging

Key Applications

VDI

Media/Video Streaming

Animation & Modeling

3D Rendering

-

5th/4th Gen Intel® Xeon® Scalable processor support

32 DIMM slots; up to 8TB (32x 256GB DRAM); memory type: 5600 MT/s ECC DDR5

AIOM/OCP 3.0 Support

13 PCIe 5.0 x16 FHFL slots

8x Hot-swap 2.5" SATA/SAS drive bays (AOC required)

8x 2.5" Hot-swap SATA drive bays

8x 2.5" Hot-swap NVMe drive bays

10 Hot-swap heavy-duty fans with optimal fan speed control

GPU SuperServer SYS-421GE-TNRT

DP Intel 4U Dual-Root PCIe GPU System with up to 10 GPUs

High Performance Computing

AI/Deep Learning Training

Cloud Gaming

Design & Visualization

Diagnostic Imaging

Key Applications

VDI

Media/Video Streaming

Animation & Modeling

3D Rendering

-

5th/4th Gen Intel® Xeon® Scalable processor support

32 DIMM slots; up to 8TB (32x 256GB DRAM); memory type: 5600 MT/s ECC DDR5

AIOM/OCP 3.0 Support

13 PCIe 5.0 x16 FHFL slots

8x Hot-swap 2.5" SATA/SAS drive bays (AOC required)

8x 2.5" Hot-swap SATA drive bays

8x 2.5" Hot-swap NVMe drive bays

8 Hot-swap heavy-duty fans with optimal fan speed control

4x 2700W (2+2) Redundant Power Supplies, Titanium Level

GPU ARS-111GL-NHR

NVIDIA GH200 Grace Hopper Superchip system supporting NVIDIA BlueField-3 or NVIDIA ConnectX-7

High Performance Computing

Large Language Model (LLM) & Generative AI

Key Applications

AI/Deep Learning Training & Inference

-

High-density 1U GPU system with integrated NVIDIA® H100 GPU

NVIDIA Grace Hopper™ Superchip (Grace CPU and H100 GPU)

NVLink® Chip-2-Chip (C2C) high-bandwidth, low-latency interconnect between CPU and GPU at 900GB/s

Up to 576GB of coherent memory per node including 480GB LPDDR5X and 96GB of HBM3 for LLM applications

2x PCIe 5.0 x16 slots supporting NVIDIA BlueField®-3 or ConnectX®-7

9 Hot-swap heavy-duty fans with optimal fan speed control

This system supports two E1.S drives directly from the processor only.

GPU ARS-111GL-DNHR-LCC

1U 2-Node NVIDIA GH200 Grace Hopper Superchip system with liquid-cooling supporting NVIDIA BlueField-3 or NVIDIA ConnectX-7

High Performance Computing

Large Language Model (LLM) & Generative AI

AI/Deep Learning Training & Inference

-

High density 1U 2-node GPU system with Integrated NVIDIA® H100 GPU (1 per Node)

NVIDIA Grace Hopper™ Superchip (Grace CPU and H100 GPU)

NVLink® Chip-2-Chip (C2C) high-bandwidth, low-latency interconnect between CPU and GPU at 900GB/s

Up to 576GB of coherent memory per node including 480GB LPDDR5X and 96GB of HBM3 for LLM applications

2x PCIe 5.0 x16 slots per node supporting NVIDIA BlueField®-3 or ConnectX®-7

7 Hot-Swap Heavy Duty Fans with Optimal Fan Speed Control

This system supports two E1.S drives directly from the processor only.